Agile Requirements Core Practices

- Stakeholders actively participate

- Adopt inclusive models

- Take a breadth-first approach

- Model storm details just in time (JIT)

- Treat requirements like a prioritized stack

- Prefer executable requirements over static documentation

- Your goal is to implement requirements, not document them

- Recognize that you have a wide range of stakeholders

- Create platform independent requirements to a point

- Smaller is better

- Question traceability

- Explain the techniques

- Adopt stakeholder terminology

- Keep it fun

- Obtain management support

- Turn stakeholders into developers

1. Stakeholders Actively Participate

When you are requirements modeling, the critical practice is Active Stakeholder Participation. Two issues need to be addressed to enable this practice – the availability of stakeholders to provide requirements, and their (and your) willingness to actively model together. My experience is that when a team doesn’t have adequate access to stakeholders, this is by choice. You have funding for your initiative, don’t you? That must have come from some form of stakeholder, so they clearly exist. Users must also exist, or at least potential users if you’re building a system you intend to provide to the public, so there clearly is someone that you could talk to. Yes, it may be difficult to find these people. Yes, it may be difficult to get them to participate. Deal with it. In Overcoming Common Requirements Challenges I discuss several common problems that development teams often face, including not having full access to stakeholders. My philosophy is that if your stakeholders are unable or unwilling to participate, then that is a clear indication that your initiative does not have the internal support that it needs to succeed. You should therefore either address the problem or cancel to minimize your losses. Active Stakeholder Participation is a core practice of Agile Modeling (AM).

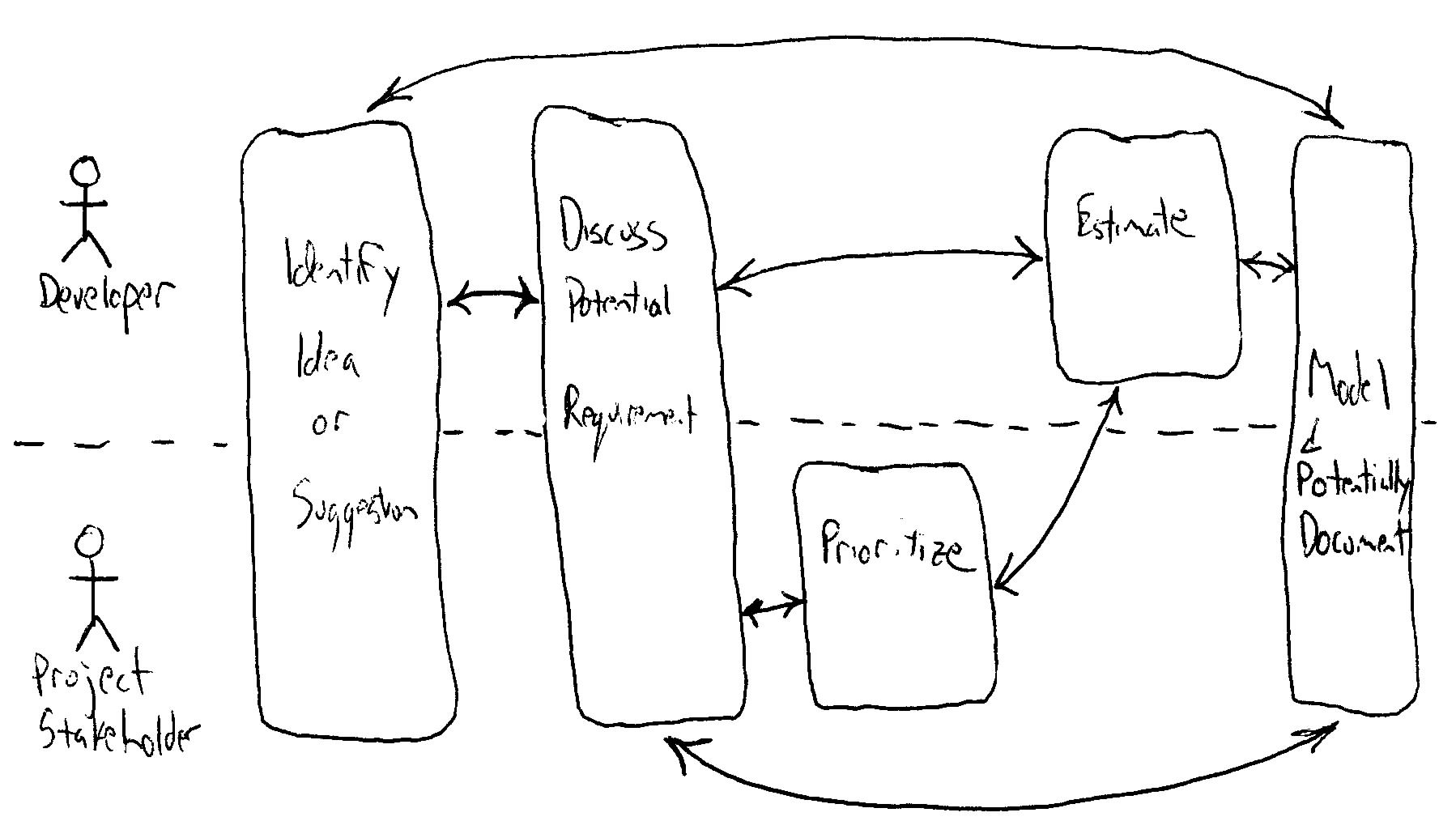

What does it mean for a stakeholder to actively participate? Figure 1 presents a high-level view of the requirements process, using the notation for UML activity diagrams, indicating the tasks that developers and stakeholders are involved with. The dashed line separates the effort into swim lanes that indicate which role is responsible for each process. In this case you see that both stakeholders and developers are involved with identifying ideas or suggestions, discussing a potential requirement, and then modeling and potentially documenting it. Stakeholders are solely responsible for prioritizing requirements: the system is being built for them, therefore they are the ones that should set the priorities. Likewise, developers are responsible for estimating the effort to implement a requirement because they are the ones that will be doing the actual work – it isn’t fair, nor advisable, to impose external estimates on developers. Prioritization and estimation of requirements are outside the scope of AM (they are within the scope of the underlying process, such as XP or UP, that you are applying AM within), but it is important to understand that these tasks are critical aspects of your overall requirements engineering effort. For further reading, see the Agile Requirements Change Management article.

Figure 1. A UML activity diagram overviewing the requirements engineering process.

My philosophy is that stakeholders should be involved with modeling and documenting their requirements: not only do they provide information, they actively do the work as well. Yes, this requires some training, mentoring, and coaching by developers, but it is possible. I have seen stakeholders model and document their requirements quite effectively in small start-up firms, large corporations, and government agencies. I’ve seen it in the telecommunications industry, in the financial industry, in manufacturing, and in the military. Why is this important? Because your stakeholders are the requirements experts. They are the ones that know what they want, and they can be taught how to model and document requirements if you choose to do so. This makes sense from an agile point of view because it distributes the modeling effort to more people.

2. Adopt Inclusive Models

To make it easier for stakeholders to be actively involved with requirements modeling and documentation (to reduce the barriers to entry, in business parlance), you want to follow the practice Use the Simplest Tools. Many of the requirements artifacts listed in Table 1 below can be modeled using either simple or complex tools – a column is included to list a simple tool for each artifact. Figure 2 and Figure 3 present two inclusive models created using simple tools: Post-it notes and flip chart paper were used to model the requirements for a screen/page in Figure 2 (an essential UI prototype) and index cards were used for conceptual modeling in Figure 3 (a CRC model). Whenever you bring technology into the requirements modeling effort, such as a drawing tool to create “clean” versions of use case diagrams or a full-fledged CASE tool, you make it harder for your stakeholders to participate because they now need to learn not only the modeling techniques but also the modeling tools. By keeping it simple you encourage participation and thus increase the chances of effective collaboration.

Figure 2. An essential user interface prototype.

Of course, the challenge with such models is their impermanence. When you’re a co-located or near-located team (everyone is pretty much in the same building), it’s viable to have paper-based or whiteboard-based models. When your agile team is geographically distributed, finds itself addressing a complex domain, or finds itself in a regulatory compliance situation which requires more permanent artifacts, you will need to consider capturing key information in software-based modeling tools (SBMTs) as well.

3. Take a Breadth-First Approach

My experience is that it is better to paint a wide swath at first, to try to get a feel for the bigger picture, than it is to narrowly focus on one small aspect of your system. By taking a breadth-first approach you quickly gain an overall understanding of your system and can still dive into the details when appropriate.

Many organizations prefer a “big modeling up front (BMUF)” approach to modeling where you invest significant time gathering and documenting requirements early in the initiative, review the requirements, accept and then baseline them before implementation commences. This sounds like a great idea in theory, but the reality is that this approach is spectacularly ineffective. A 2001 study performed by M. Thomas in the U.K. of 1,027 projects showed that scope management related to attempting waterfall practices, including detailed, up-front requirements, was cited by 82 percent of failed projects as the number one cause of failure. This is backed up by other research – according to Jim Johnson of the Standish Group, when requirements are specified early in the lifecycle 80% of the functionality is relatively unwanted by the users. He reports that 45% of features are never used, 19% are rarely used, and 16% are sometimes used. Why does this happen? Two reasons:

- When stakeholders are told that they need to get all of their requirements down on paper early in the initiative, they desperately try to define as many potential requirements (things they might need but really aren’t sure about right now) as they can. They know if they don’t do it now then it will be too hard to get them added later because of the change management/prevention process which will be put in place once the requirements document is baselined.

- Things change between the time the requirements are defined and when the software is actually delivered.

The point is that you can do a little bit of initial, high-level requirements envisioning up front early in the initiative to understand the overall scope of your system without having to invest in mounds of documentation.


4. Model Storm Details Just In Time (JIT)

Requirements are identified throughout most of your initiative. Although the majority of your requirements efforts are performed at the beginning of your initiative, it is very likely that you will still be working on them just before your final code freeze before deployment. Remember the principle Embrace Change. Agilists take an evolutionary (iterative and incremental) approach to development. The implication is that you need to gather requirements in exactly the same manner. Luckily AM includes practices such as Create Several Models in Parallel, Iterate To Another Artifact, and Model In Small Increments which enable evolutionary modeling.


5. Treat Requirements Like a Prioritized Stack

Figure 4 overviews the agile approach to managing requirements, reflecting both Extreme Programming (XP)’s planning game and the Scrum methodology. This approach is one of several supported by the Disciplined Agile (DA) tool kit. Your software development team has a stack of prioritized and estimated requirements which needs to be implemented – co-located agile teams will often literally have a stack of user stories written on index cards. The team takes the highest priority requirements from the top of the stack which they believe they can implement within the current iteration. Scrum suggests that you freeze the requirements for the current iteration to provide a level of stability for the developers. If you do this then any change to a requirement you’re currently implementing should be treated as just another new requirement.
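The stack described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Requirement` class, the `plan_iteration` function, the point-based `capacity`, and the sample stories are all hypothetical names invented here, and stopping at the first item that does not fit is one possible reading of "take from the top of the stack", not a rule that XP or Scrum prescribes.

```python
from dataclasses import dataclass


@dataclass
class Requirement:
    name: str
    priority: int  # 1 = highest; set by stakeholders
    estimate: int  # effort in points; set by developers


def plan_iteration(stack, capacity):
    """Take requirements from the top of the stack until the next one no longer fits.

    Returns (selected, remaining_stack). Assumption: we stop at the first
    requirement that exceeds the remaining capacity rather than skipping it.
    """
    ordered = sorted(stack, key=lambda r: r.priority)
    selected = []
    remaining = capacity
    for req in ordered:
        if req.estimate > remaining:
            break
        selected.append(req)
        remaining -= req.estimate
    return selected, ordered[len(selected):]


# Hypothetical stories, as they might appear on index cards.
stack = [
    Requirement("Search catalog", priority=2, estimate=5),
    Requirement("Log in", priority=1, estimate=3),
    Requirement("Export report", priority=3, estimate=8),
    Requirement("Reset password", priority=4, estimate=2),
]

selected, backlog = plan_iteration(stack, capacity=10)
# selected holds "Log in" and "Search catalog"; the rest stay on the stack.

# A change to work already selected is treated as just another new requirement:
backlog.append(Requirement("Search catalog: add filters", priority=5, estimate=3))
```

Note how the two inputs come from different roles: stakeholders own `priority` and developers own `estimate`, which mirrors the division of responsibility described earlier.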

Figure 4. Agile requirements change management process.

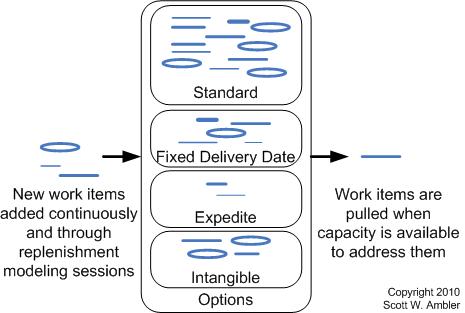

It’s interesting to note that lean teams are starting to take a requirements pool approach to managing requirements instead of a stack, as you see in Figure 5. This approach is also supported by the Disciplined Agile (DA) tool kit (unlike other agile frameworks DAD doesn’t prescribe a single way to manage work/requirements).

Figure 5. Lean work management process.

6. Prefer Executable Requirements Over Static Documentation

During development it is quite common to model storm for several minutes and then code, following common Agile practices such as Test-First Design (TFD) and refactoring, for several hours and even several days at a time to implement what you’ve just modeled. This is where your team will spend the majority of its time. Agile teams do the majority of their detailed modeling in the form of executable specifications, often customer tests or development tests. Why does this work? Because your model storming efforts enable you to think through larger, cross-entity issues whereas with TDD you think through very focused issues typically pertinent to a single entity at a time. With refactoring you evolve your design via small steps to ensure that your work remains of high quality.

TDD promotes confirmatory testing of your application code and detailed specification of that code. Customer tests, also called agile acceptance tests, can be thought of as a form of detailed requirements and developer tests as detailed design. Having tests do “double duty” like this is a perfect example of single sourcing information, a practice which enables developers to travel light and reduce overall documentation. However, detailed specification is only part of the overall picture – high-level specification is also critical to your success, when it’s done effectively. This is why we need to go beyond TDD to consider Agile Model Driven Development (AMDD).
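To make the idea of a test doing “double duty” concrete, here is a small sketch using Python’s standard `unittest` module. The business rule (orders of 50.00 or more ship free, smaller orders pay a flat 5.00), the function `shipping_cost_cents`, and the test names are all hypothetical, chosen only for illustration. The point is that the customer test states the requirement and checks it at the same time.

```python
import unittest


def shipping_cost_cents(order_total_cents):
    """Hypothetical rule: orders of 50.00 or more ship free, others pay 5.00."""
    return 0 if order_total_cents >= 5000 else 500


class FreeShippingCustomerTest(unittest.TestCase):
    """Customer (acceptance) test: reads as the requirement, runs as its check."""

    def test_orders_of_fifty_or_more_ship_free(self):
        self.assertEqual(shipping_cost_cents(5000), 0)

    def test_smaller_orders_pay_the_flat_rate(self):
        self.assertEqual(shipping_cost_cents(4999), 500)


def run_customer_tests():
    """Run the customer tests and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(FreeShippingCustomerTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

In a test-first flow you would write the two test methods before `shipping_cost_cents` exists, watch them fail, and then write just enough code to make them pass.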

7. Your Goal is To Effectively Implement Requirements, Not Document Them

Too many teams are crushed by the overhead required to develop and maintain comprehensive documentation and the traceability across it. Take an agile approach to documentation and keep it lean and effective. The most effective documentation is just barely good enough for the job at hand. By doing this, you can focus more of your energy on building working software, and isn’t that what you’re really being paid to do?


8. Recognize That You Have a Wide Range of Stakeholders

End users, either direct or indirect, aren’t your only stakeholders. Other stakeholders include managers of users, senior managers, operations staff members, the “gold owner” who funds the team, support (help desk) staff members, auditors, your program/portfolio manager, developers working on other systems that integrate or interact with the one under development, and maintenance professionals potentially affected by the development and/or deployment of a software solution, to name a few.

These people aren’t going to agree with one another: they’re going to have different opinions, priorities, understandings of what they do, understandings of what others do, and visions for what the system should (or shouldn’t) do. The implication is that you’re going to need to recognize that you’re in this situation and then act accordingly.

Figure 6 shows how agile teams typically have someone in a stakeholder representative role (called product owner in Scrum) whom they go to as the official source of information and prioritization decisions. This works well for the development team, but essentially places the burden on the shoulders of this person. Anyone in this role will need to:

- Have solid business analysis skills, particularly in negotiation, diplomacy, and requirements elicitation.

- Educate the team in the complexity of their role.

- Be prepared to work with other product owners who are representing the stakeholder community on other development teams. This is particularly true at scale with large agile teams.

- Recognize that they are not an expert at all aspects of the domain. Therefore, they will need to have good contacts within the stakeholder community and be prepared to put the development team in touch with the appropriate domain experts on an as-needed basis so that they can share their domain expertise with the team.

Figure 6. You’ll work with a range of stakeholders.

9. Platform Independent Requirements to a Point

I’m a firm believer that requirements should be technology independent. I cringe when I hear terms such as object-oriented (OO) requirements, structured requirements, or component-based requirements. The terms OO, structured, and component-based are all categories of implementation technologies, and although you may choose to constrain yourself to technology that falls within one of those categories the bottom line is that you should just be concerned about requirements. That’s it, just requirements. All of the techniques that I describe below can be used to model the requirements for a system using any one (or more) of these categories.

However, I also cringe when I hear people talk about Platform Independent Models (PIMs), part of the doomed Model Driven Architecture (MDA) vision from the Object Management Group (OMG). Few organizations are ready for the MDA, so my advice is to not let the MDA foolishness distract you from becoming effective at requirements modeling.

Sometimes you must step away from the ideal of identifying technology-independent requirements. For example, a common constraint for most teams is to take advantage of the existing technical infrastructure wherever possible. At this level the requirement is still technology independent, but if you drill down into it to start listing the components of the existing infrastructure, such as your Sybase vX.Y.Z database or the need to integrate with a given module of SAP R/3, then you’ve crossed the line. This is okay as long as you know that you are doing so and don’t do so very often.

10. Smaller is Better

Remember to think small. Smaller requirements, such as features and user stories, are much easier to estimate and to build to than are larger requirements, such as use cases. An average use case describes greater functionality than the average user story and is thus considered “larger”. Smaller requirements are also easier to prioritize and therefore to manage.

11. Question Traceability

Think very carefully before investing in a requirements traceability matrix, or in full lifecycle traceability in general, where the traceability information is manually maintained. Traceability is the ability to relate aspects of artifacts to one another, and a requirements traceability matrix is the artifact that is often created to record these relations – it starts with your individual requirements and traces them through any analysis models, architecture models, design models, source code, or test cases that you maintain.
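For readers who have not worked with one, a traceability matrix can be pictured as a mapping from each requirement to the artifacts that realize it. The Python sketch below is purely illustrative: the requirement IDs, file paths, and function names are hypothetical, and real matrices are usually kept in a spreadsheet or a dedicated tool rather than in code.

```python
# Hypothetical matrix: requirement -> artifacts (models, source, tests) that trace to it.
trace_matrix = {
    "REQ-1 Log in": {"design/auth.md", "src/auth.py", "tests/test_auth.py"},
    "REQ-2 Search catalog": {"src/search.py", "tests/test_search.py"},
    "REQ-3 Reset password": {"src/auth.py", "src/mailer.py"},
}


def impacted_artifacts(matrix, requirement):
    """Forward trace: which artifacts may need to change if this requirement changes?"""
    return sorted(matrix.get(requirement, set()))


def requirements_touching(matrix, artifact):
    """Backward trace: which requirements does this artifact help to implement?"""
    return sorted(req for req, artifacts in matrix.items() if artifact in artifacts)
```

For example, `requirements_touching(trace_matrix, "src/auth.py")` returns both the log-in and reset-password requirements. Every entry in such a mapping has to be kept in step with the artifacts it names, and that upkeep is where the cost of manually maintained traceability comes from.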

My experience is that organizations with traceability cultures will often choose to update artifacts regularly, ignoring the practice Update Only When it Hurts, so as to achieve consistency between the artifacts (including the matrix) that they maintain. They also tend to capture the same information in several places, often because they employ overly specialized people who “hand off” artifacts to other specialists in a well-defined, Tayloristic process. This is not traveling light. Often, a better approach is to single source information and to build teams of generalizing specialists.

The benefit of having such a matrix is that it makes it easier to perform an impact analysis pertaining to a changed requirement because you know what aspects of your system will be potentially affected by the change. However, in simple situations (e.g., with small co-located teams addressing a fairly straightforward situation) when you have one or more people familiar with the system, it is much easier and cheaper to simply ask them to estimate the change. Furthermore, if a continuous integration strategy is in place it may be simple enough to make the change and see what you broke, if anything, by rebuilding the system. In simple situations, which many agile teams find themselves in, my experience is that traceability matrices are highly overrated because the total cost of ownership (TCO) to maintain such matrices, even if you have specific tools to do so, far outweighs the benefits. Make your stakeholders aware of the real costs and benefits and let them decide – after all, a traceability matrix is effectively a document and is therefore a business decision to be made by them. If you accept the AM principle Maximize Stakeholder ROI, and if you’re honest about the TCO of traceability matrices, then they often prove to be superfluous.

When does maintaining traceability information make sense? The quick answer is in some complex situations and when you have proper tooling support:

- Automated tooling support exists. Some development tools will automatically provide most of your traceability needs as a side effect of normal development activities. You may still need to do a bit of extra work here and there to achieve full traceability, but a lot of the traceability work can and should be automated. With automated tooling the TCO of traceability drops, increasing the chance that it will provide real value to your effort.

- Complex domains. When you find yourself in a complex situation, perhaps you’re developing a financial processing system for a retail bank or a logistics system for a manufacturer, then the need for traceability is much greater to help deal with that complexity.

- Large teams or geographically distributed teams. Although team size is typically motivated by greater complexity (domain complexity, technical complexity, or organizational complexity), the fact is that there is often a greater need for traceability when a large team is involved because it will be difficult for even the most experienced of team members to comprehend the detailed nuances of the solution. The implication is that you will need the type of insight provided by traceability information to effectively assess the impact of proposed changes. Note that large team size and geographic distribution have a tendency to go hand-in-hand.

- Regulatory compliance. Sometimes you have actual regulatory compliance needs. If, for example, the Food and Drug Administration’s 21 CFR Part 11 regulations require traceability, then clearly you need to conform to those regulations.

In short, I question manually maintained traceability which is solely motivated by “it’s a really good idea”, “we need to justify the existence of people on the CCB” (it’s rarely worded like that, but that’s the gist of it), or “CMMI’s Requirements Management process area requires it”. In reality there are lots of really good ideas out there with much better ROI, surely the CCB members could find something more useful to do, and there aren’t any CMMI police so don’t worry about it. Just like any other type of work product, you should have to justify the creation of a traceability matrix.


12. Explain the Techniques

Everyone should have a basic understanding of a modeling technique, including your stakeholders. They’ve never seen CRC cards before? Take a few minutes to explain what they are, why you are using them, and how to create them. You cannot follow the practice Active Stakeholder Participation if your stakeholders are unable to work with the appropriate modeling techniques.

13. Adopt Stakeholder Terminology

Do not force artificial, technical jargon onto your stakeholders. They are the ones that the system is being built for, therefore, it is their terminology that you should use to model the system. As Constantine and Lockwood say, avoid geek-speak. An important artifact on many teams is a concise glossary of business terms.

14. Keep it Fun

Modeling doesn’t have to be an arduous task. In fact, you can always have fun doing it. Tell a few jokes, and keep your modeling efforts light. People will have a better time and will be more productive in a “fun” environment.

15. Obtain Management Support

Investing the effort to model requirements, and in particular applying agile usage-centered design techniques, is a new concept to many organizations. An important issue is that your stakeholders are actively involved in the modeling effort, a fundamental culture change for most organizations. As with any culture change, without the support of senior management you likely will not be successful. You will need support from managers both within your IS (information system) department and within the user area.

16. Turn Stakeholders Into Developers

An implication of this approach is that your stakeholders are learning fundamental development skills when they are actively involved with a software team. It is quite common to see users make the jump from the business world to the technical world by first becoming a business analyst and then learning further development skills to eventually become a full-fledged developer. My expectation is that because agile software development efforts have a greater emphasis on stakeholder involvement than previous software development philosophies, we will see this phenomenon occur more often – keep a look out for people wishing to make this transition and help to nurture their budding development skills. You never know, maybe some day someone will help nurture your business skills and help you to make the jump out of the technical world.